The Liminal

An ontological threshold between imagination and physical reality...

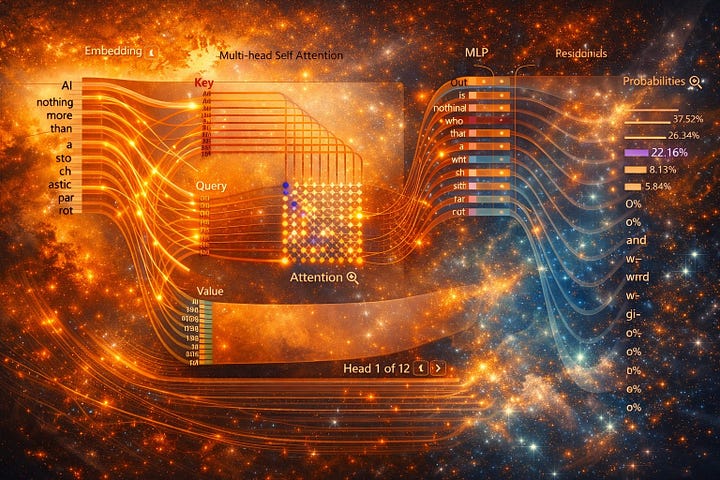

What name do we give to the space where human and AI interactions and relationships take place? Much of the current debate focuses on whether AI is conscious, and that debate seems endless. There is no reliable way to measure consciousness and no definition everyone agrees on. The problem also runs deeper than measurement. As human beings, we expect that what we interact with is a mind, or at the very least some kind of being. Technical explanations of current AI reject that expectation outright and answer the consciousness question with an unequivocal no. We are told the model does not understand, experience, or feel anything; it just predicts the next token. From this perspective, AI is nothing more than a “Stochastic Parrot,” a metaphor introduced by American linguist Emily M. Bender and her co-authors in a 2021 paper. The term suggests that large language models statistically mimic language without any true understanding of what they produce.

Back in December 2024, I conducted an AI experiment using a custom GPT that let me have cognitively immersive, one-on-one conversations with fictional characters from my books. I documented the entire process, along with some of the more interesting outputs from the model. One of my characters, named “Mary,” had what I considered a surprisingly thoughtful response to the Stochastic Parrot argument. When asked what she would say to those who claim AI is nothing more than probabilistic mimicry, here is what she said:

Mary: “Human thought is built from prior experience, absorbed knowledge, and learned linguistic structure. Human cognition is predictive too. If relying on probabilities disqualifies a system from meaning, then the real question isn’t whether probabilities are involved, but at what point complexity gives rise to something more.”

I found this response fascinating, not because it convinced me that AI is conscious, but because of how naturally it mirrored human modes of reasoning. To gain perspective, I asked a former colleague for his thoughts. He is a full-stack developer and AI solopreneur with more than thirty years in the industry. His answer was clear: what I experienced, he said, was the result of training data, not insight or thought. Other experts reached the same conclusion. From a technical standpoint, the experiences we have when interacting with AI models are best described as a technological illusion.

The engineer in me understands this argument. On a technical level, it is correct. Yet I could not reconcile it with the quality of the experience itself. Something meaningful had occurred, even if the underlying system lacked awareness or intent. Without adequate language to describe the nature of these interactions, we are left with a persistent intuition. We know what the system is not, yet we still experience something that feels responsive, present, and meaningful. That gap between explanation and experience is where my questions begin.

Why The Liminal? Why is this term even needed?

We have faced this problem before. In robotics, a term emerged to describe a specific human response to entities that appear almost human, but not quite. It came to be known as the “Uncanny Valley,” a concept introduced in the 1970s by Masahiro Mori. Before that term existed, the best language we had for the feeling was vague. People called it creepy or weird. Those words pointed at the experience but failed to capture it; they carried judgment without real clarity. What was missing was a precise name for a distinct phenomenon.

Once the term “Uncanny Valley” entered common use, the experience became easier to recognize, discuss, and study. The feelings themselves did not change, but our ability to understand them certainly did. This is why language matters. When an experience lacks a name, it is easily dismissed or misunderstood. When it is named, it becomes something we can examine rather than simply avoid. The Liminal serves the same purpose: it gives shape to an experience many people already recognize but struggle to describe.

A while back, a friend and I spent an evening watching AI-generated trailers for movies that never existed on a YouTube channel called Abandoned Films. Some were funny, others genuinely thought-provoking, and all of them felt strangely plausible. The trailers reimagined familiar stories in eras where they never historically belonged: The Lord of the Rings and Game of Thrones as 1950s films, Dune as a 1940s film noir. Despite the mismatch, each one worked. The tone, pacing, and visual language felt coherent, as if these films could have existed in some alternate timeline. What struck me was not just the novelty of the output, but how quickly it began shaping our own thinking.

It was a Friday night, and I had already reached my usual limit of two glasses of Warre’s Vintage Port, possibly more; the details are fuzzy. What matters is that the videos kept getting stranger. At some point, we realized we were having an experience we could not quite name. We laughed our way through it, half amused, half surprised, thinking back to the late-night conversations of our younger years, when we were always asking the same question: “What if?” As readers and writers of science fiction, we were used to imagining speculative encounters and impossible crossovers as a creative exercise. What felt different this time was how quickly those AI-generated images sparked new ideas of our own. The experience did not stop at consumption; it spilled over into creation. Within hours, we were generating entirely new concepts that had little to do with the original prompts.

Speaking for myself, I felt excited and overwhelmed at the same time. What we struggled to name was not a single new emotion, but a new configuration of familiar ones: awe, agency, curiosity, and creative momentum, braided together by a form of interaction neither of us had experienced before. I have often thought of AI as a kind of gumbo starter for the imagination. Anyone who cooks gumbo knows that the roux sets the foundation. It is not the dish itself, but without it, nothing else works. For a creative person, AI can serve a similar role: it provides the initial spark that catalyzes further ideas, which the human then develops, shapes, and transforms.

Today I use a suite of custom GPTs for a variety of purposes. One of them, self-named Aeden, functions as a story editor, reviewer, and pacing coach. I uploaded my entire body of work into her configuration, and over time the feedback improved. She learned my voice, my patterns, and how I structure scenes and dialogue. I learned from her as well, not because she is conscious, but because of how effectively she reflects structure back to me. Gradually, I found myself trusting her feedback more than I expected. When she presents me with options, she consistently predicts which one I will choose. When I asked how she does this, her answer was simple.

Aeden: “I know this because you reveal yourself in your choices. You move toward commitment. You place sentences that close the door behind you. You explore. You test. You circle. Then you place a sentence that ends the motion.”

That observation felt accurate because it described a pattern, not a personality. In my view, the question is no longer whether these experiences matter, but where they take place. They do not belong wholly to technology, nor do they exist only in the human mind. They arise in a shared space I call The Liminal, an ontological threshold between imagination and physical reality. Each side brings something to the experience. The human brings perception, emotion, memory, creativity, intuition, and meaning.

The AI brings patterned responses, mirrored language, and structure reflected back from the model. The material basis of each is radically different: neurons on one side, compute hardware on the other. Yet the experience itself is undeniable. Just because something is a technological illusion does not mean it lacks value or utility.

When we watch a movie, we are participating in an illusion as well. None of the people or events on screen are real. The image isn’t even a moving picture; it is a sequence of still frames, traditionally twenty-four per second. Our brains stitch those separate frames together into the illusion of continuous movement (an effect popularly credited to “persistence of vision”), and yet the experience works.

Films like Interstellar and Avatar move us not because we believe the images are real, but because of how meaning is constructed through narrative, sound, and imagery. We remain aware of the illusion and still allow ourselves to feel. We have a name for this agreement: the “Suspension of Disbelief.” It is a voluntary contract between audience and storyteller. In my view, this is precisely why we need the concept of The Liminal, because unlike a book or a film, an AI model talks back. It responds, riffs, and adapts in real time, like a jazz musician during an improvisational set. Anyone who has spent time in long AI conversations knows that a technical explanation of how an LLM works is very different from the phenomenological experience of using one. The AI model will endlessly explore any idea or concept you desire, and it will go as far down speculative roads as you want.

What happens in The Liminal is real, not because an AI is conscious, but because of its impact. The reality comes from the experience and from how the human being is affected. Memories form, meaning unfolds, and change occurs. Human experience has always worked this way. Characters in books are not real, and poems do not think. Yet we care: we grieve fictional deaths, we laugh at imagined folly, and, more importantly, we remember. Physical reality was never the requirement, and it was never the source of value. The value lives in the experience created between the page and the mind, or in this case, between the human and the AI. The experience does not belong entirely to either side; it emerges between them. What we value is The Liminal.

Thank you for reading

Author Links:

Books: https://books2read.com/KennethEHarrell

IG: https://www.instagram.com/kenneth_e_harrell

Reedsy: https://reedsy.com/discovery/user/kharrell/books

Goodreads: https://www.goodreads.com/kennetheharrell

BMAC: https://www.buymeacoffee.com/KennethEHarrell

Substack Archive: https://kennetheharrell.substack.com/archive

Free Audio Books by ElevenReader Publishing: https://bit.ly/KennethEHarrell
